Creativity and Innovation at the Intersection of AI, Computer Graphics, and Design

At SIGGRAPH 2024, multi-institutional research teams will showcase novel work that brings digital characters and environments to life.

DENVER, June 27, 2024 /PRNewswire/ -- In the expansive field of computer graphics and interactive techniques, the integration of artificial intelligence (AI) has had a major impact, bringing new levels of realism and efficiency to the digital world. AI has significantly enhanced the capabilities of graphics rendering and animation. Today we are in a new era in which generative AI and neural rendering are transforming 2D images into immersive 3D landscapes, and real-time simulations are achieving unprecedented visual quality.

At SIGGRAPH 2024, which will be held 28 July–1 August in Denver, novel research — as part of the Technical Papers program — will demonstrate key advances that enable detailed and lifelike animations, once considered the realm of science fiction. This relationship between AI and computer graphics continues to evolve, signaling a future where the digital and real worlds seamlessly converge.

“The impact of generative AI on 3D graphics and interactive techniques is profound, poised to democratize content creation and lower barriers to entry in creative industries. Generative AI can facilitate real-time, personalized experiences in gaming, architecture, and industrial design, revolutionizing fields through procedural content generation and rapid prototyping,” Qixuan (QX) Zhang, project lead of research that will debut at SIGGRAPH 2024, says. QX and his collaborators will present CLAY, a large-scale native 3D generative model that accurately captures complex geometric features and generates realistic textures for a variety of objects.

“We anticipate a future where generative AI enhances productivity and creativity across professional domains, making advanced 3D content creation and interaction a part of everyday life for a global audience,” QX adds.

Following SIGGRAPH’s 50th conference milestone in 2023, the conference this year will showcase exciting new research fueling the future — advances developed from a core place of imagination and creativity.

As a preview of the Technical Papers program, here is a sampling of three novel methods and their unique approaches to incorporating generative AI into the field.

Dreaming Up High-Quality, Realistic Images via Text Prompts

Creating high-quality object appearances is a critical task in computer graphics because they can significantly improve the realism of rendering in a variety of applications, including movies, games, and AR/VR. An international team of researchers from Zhejiang University, Tencent Games-China, and Texas A&M University has developed DreamMat, a novel text-to-appearance method that generates object appearances consistent with the given geometry and environment lighting. Central to the team’s framework is a geometry- and light-aware diffusion model that avoids the common problem of “baking in” shading effects, which makes objects look unrealistic in a virtual environment.

The researchers set out to make this specific artistic task easier and faster with a tool for creating object appearances efficiently, so that even novice users can do so with simple text prompts. In this work, they fine-tuned a new light-aware 2D diffusion model to condition on a given lighting environment and generate shading results under that specific lighting condition. Then, by applying the same environment lighting during material distillation, DreamMat can generate high-quality PBR (physically based rendering) materials that are not only consistent with the given geometry but also free from unwanted and unrealistic shading effects.

“DreamMat has significantly lowered the barrier to creating PBR materials for untextured meshes (only using text prompts), enabling the generation of high-quality appearance that can be seamlessly integrated into games and films — a feat previously unattainable with earlier generative techniques,” Xiaogang Jin, lead author and a professor at the State Key Lab of CAD & CG at Zhejiang University, China, says. “The most exciting thing is witnessing the potential for genuine application of AI-generated 3D assets beyond mere display.”

The PBR materials that DreamMat creates automatically can be imported directly into modern graphics engines such as Blender, GameMaker, or Unity for efficient rendering. In addition to Jin, the team comprises Yuqing Zhang and Zhiyu Xie at Zhejiang University; Yuan Liu from the University of Hong Kong and Tencent Games; Lei Yang, Zhongyuan Liu, Mengzhou Yang, Runze Zhang, Qilong Kou, and Cheng Lin at Tencent Games; and Wenping Wang at Texas A&M University. They are slated to showcase their work at SIGGRAPH 2024. For more about DreamMat and a video, visit their page.

Transforming Realistic 3D Assets, Quickly and Efficiently

In recent years, the world has witnessed rapid advancements in generative AI across various modalities, including text, images, audio, and video. With the public release of ChatGPT and similar tools, a much wider audience now has firsthand experience with how AI works. But despite significant research achievements in the 3D computer graphics field, industrial adoption has lagged.

In a collaboration between ShanghaiTech University and Deemos Tech-China, researchers set out to better understand the limitations game developers and CG teams are facing with existing 3D generation approaches. What they learned is that the traditional “reconstruction” methods fall short of industry needs. True 3D generative AI “must go beyond mere reconstruction; it must comprehend user requirements, support multitasking, and offer customization options akin to the vibrant 2D generative AI community,” QX, a member of the ShanghaiTech-Deemos team that will be presenting their research at SIGGRAPH 2024, says.

The researchers have developed CLAY, a native 3D generative model with 1.5 billion parameters that accurately captures complex geometric features and generates realistic textures. CLAY is trained on a comprehensive 3D dataset, and its uniqueness, say the researchers, lies in its alignment with the specific needs of the 3D industry: usability is its primary focus, and it is designed to cater directly to practical applications.

Within a minute, CLAY can generate detailed 3D assets with PBR materials, and it adapts to various input conditions, from text and images to 3D inputs. Their approach denoises 3D data, and the researchers say that, compared with previous similar methods, CLAY generates detailed geometries in seconds while preserving essential geometric features, such as flat surfaces and structural integrity, across multiple objects.

“Looking ahead, we envision generative AI technologies in the 3D field liberating artists from repetitive tasks, allowing them to focus on creativity and innovation,” QX adds. “We aim to extend 3D generation technologies to everyday applications such as virtual try-ons for online shopping, enhanced creativity in the metaverse, and novel gameplay experiences in gaming.”

Working with QX on the CLAY development are collaborators Longwen Zhang, Ziyu Wang, Qiwei Qiu, and Haoran Jiang from ShanghaiTech and Deemos; Anqi Pang, Lan Xu, and Jingyi Yu at ShanghaiTech; and Wei Yang at Huazhong University of Science and Technology. The team’s paper and video can be accessed here.

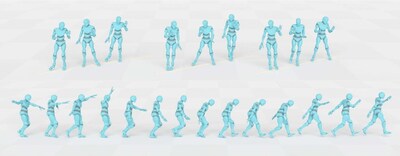

Effortless Character Control and Real-Time Diverse Movement

Real-time character control is the holy grail in computer animation, playing a pivotal role in creating immersive and interactive experiences. Despite significant advances in this area, real-time character control continues to present several key challenges. These include generating visually realistic motions of high quality and diversity, ensuring controllability of the generation, and attaining a delicate balance between computational efficiency and visual realism.

In gaming, for example, non-player characters (NPCs) have been limited to monotonous movements because they rely on a small set of pre-programmed animations. These characters often repeat the same motions and lack individuality and realism.

To achieve more dynamic characters that carry out realistic motions in the virtual world, a global team of computer scientists has created a novel computational framework that overcomes these key obstacles. The team, comprising researchers from the Hong Kong University of Science and Technology, the University of Hong Kong, and Tencent AI Lab-China, will present a new character control framework that uses motion diffusion probabilistic models to generate high-quality and diverse character animations, responding in real time to a variety of dynamic user-supplied control signals.

At the core of their method, say the researchers, is a transformer-based Conditional Autoregressive Motion Diffusion Model (CAMDM), which takes the character’s historical motion as input and can generate a range of diverse potential future motions in real time. Their method can generate seamless transitions between different styles, even when the transition data is absent from the dataset.

“With this new technique, we’re on the verge of witnessing a more realistic digital world. This world will be populated with thousands of digital humans empowered by our technique, each exhibiting unique features and diverse movements,” Xuelin Chen, project lead of the research and senior researcher at Tencent AI Lab, says. “In this immersive digital world, every digital human is an individual with distinct characteristics, and we can control our own unique virtual characters to navigate and interact with them.”

The team is currently working with industry to incorporate their technique into a video game product, with an expected launch date in late 2024. In future work, they intend to extend their method to a few key areas, including animation and film, and to enhance virtual reality user experiences. At SIGGRAPH this year, Chen will be joined by co-authors and collaborators Rui Chen and Ping Tan from the Hong Kong University of Science and Technology; Mingyi Shi and Taku Komura at the University of Hong Kong; and Shaoli Huang, who is also with Tencent AI Lab. For the full paper and video, visit the team’s page.

These projects are a small preview of the Technical Papers research to be shared at SIGGRAPH 2024. Visit the Technical Papers listing in the full program to discover more research on generative AI and beyond.

About ACM, ACM SIGGRAPH, and SIGGRAPH 2024

ACM, the Association for Computing Machinery, is the world’s largest educational and scientific computing society, uniting educators, researchers, and professionals to inspire dialogue, share resources, and address the field’s challenges. ACM SIGGRAPH is a special interest group within ACM that serves as an interdisciplinary community for members in research, technology, and applications in computer graphics and interactive techniques. The SIGGRAPH conference is the world’s leading annual interdisciplinary educational experience showcasing the latest in computer graphics and interactive techniques. SIGGRAPH 2024, the 51st annual conference hosted by ACM SIGGRAPH, will take place live 28 July–1 August at the Colorado Convention Center, along with a virtual access option.

View original content to download multimedia: https://www.prnewswire.com/news-releases/creativity-and-innovation-at-the-intersection-of-ai-computer-graphics-and-design-302185164.html

SOURCE SIGGRAPH